Evidence is key to informing effective practice in ecology and conservation. While the quality of this evidence depends on many factors, recent research by Christie et al. highlights study design as one of the most important.

A version of this post is also available in Japanese.

Why study design?

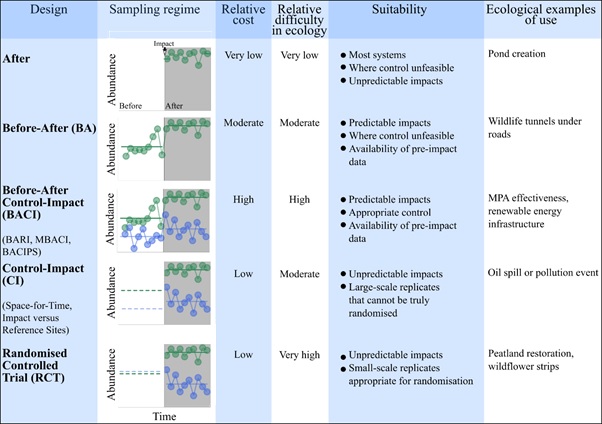

There are many ways to monitor or evaluate the impacts of a threat, or the effectiveness of our actions to mitigate such threats. For example, experimental approaches such as Randomised Controlled Trials (RCTs) are considered the gold standard for evidence in disciplines like medicine. However, these are often difficult to implement in ecology, because truly randomising sites, plots or individuals to different treatments and controls can be impossible. Instead, many ‘quasi-experimental’ approaches are used. These vary considerably in complexity (see table below). The most complex is the Before-After Control-Impact (BACI) design, which monitors impact and control groups both before and after an impact has occurred. Simpler designs include: Control-Impact (CI), which lacks pre-impact data; Before-After (BA), which lacks a control; and After, which lacks both pre-impact data and a control.
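The logic behind each design can be sketched as a simple difference of group means, which makes it clear what each simpler design silently folds into its estimate. This is a minimal sketch under stated assumptions: the `GroupMeans` container, the function names and the example numbers are hypothetical, and effects are assumed to be raw differences in mean response, which may not be the scale used in the article.

```python
from dataclasses import dataclass


@dataclass
class GroupMeans:
    """Hypothetical container: mean response of one group before and after an impact."""
    before: float
    after: float


def baci_estimate(impact: GroupMeans, control: GroupMeans) -> float:
    # Change at impact sites minus change at control sites
    return (impact.after - impact.before) - (control.after - control.before)


def ba_estimate(impact: GroupMeans) -> float:
    # No control group: any background change is credited to the impact
    return impact.after - impact.before


def ci_estimate(impact: GroupMeans, control: GroupMeans) -> float:
    # No pre-impact data: any initial difference is credited to the impact
    return impact.after - control.after


# Hypothetical scenario: true effect = 5, background change = 3, initial difference = 2
control = GroupMeans(before=10.0, after=13.0)
impact = GroupMeans(before=12.0, after=20.0)
print(baci_estimate(impact, control), ba_estimate(impact), ci_estimate(impact, control))
# prints 5.0 8.0 7.0
```

Only the BACI estimate recovers the true effect of 5; BA absorbs the background change (giving 8) and CI absorbs the initial difference (giving 7).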

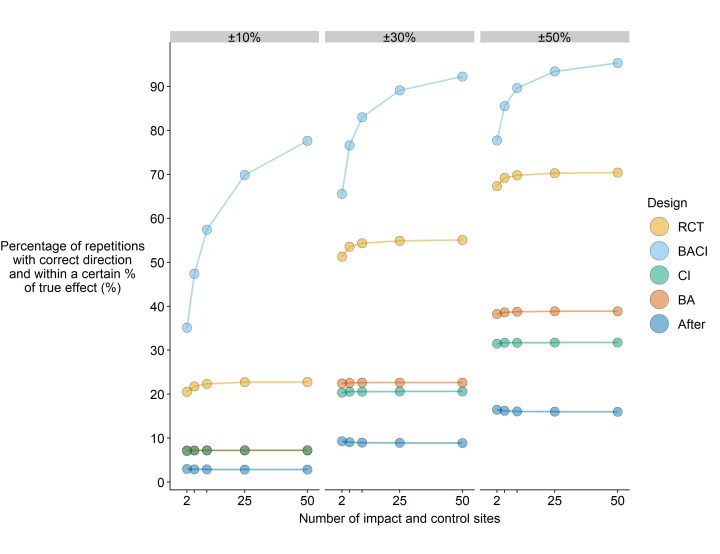

We know that studies using certain designs (e.g. RCTs or BACI) are likely to provide stronger evidence in ecology than simpler designs (e.g. CI, BA or After). However, direct quantitative comparisons of their accuracy are lacking. Such knowledge is extremely important because it could help decision makers and meta-analysts better account for study quality when assessing evidence.

What did we do?

To test the performance of different study designs, we plugged empirically derived estimates from 47 ecological datasets with BACI designs into a simulation. In the simulation, we compared each design’s ability to accurately estimate the impact of a hypothetical threat or management action on a virtual population (see below). Using empirical estimates made the simulation as realistic as possible, because they told us how likely each design is to be biased in real ecological situations, and by how much. For example, because BA designs lack a control, they will be biased by any background change between the pre- and post-impact periods (the change that a control group would have shown). CI designs will be biased by any pre-impact differences between impact and control groups. By extracting estimates of the change in control groups, the change in impact groups and the initial pre-impact differences, we could see how much each design is likely to suffer from bias.
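A toy Monte Carlo version of this reasoning illustrates why adding replicates cannot rescue a biased design: more sites shrink the noise, but the BA and CI estimates settle on the wrong value. This is a sketch, not the authors' simulation. The `simulate` function and all of its parameter values (true effect, background change, initial difference, normally distributed site noise) are hypothetical assumptions, not the empirically derived values from the 47 datasets.

```python
import random
import statistics


def simulate(n_sites: int, true_effect: float = 5.0, background_change: float = 3.0,
             initial_difference: float = 2.0, noise_sd: float = 4.0, seed: int = 1) -> dict:
    """Toy simulation: estimate an impact with BACI, BA and CI designs.

    All parameter values are illustrative assumptions. For simplicity, each
    group-period mean is computed from independently drawn sites.
    """
    rng = random.Random(seed)

    def group_mean(true_mean: float) -> float:
        # Average of n_sites noisy site-level observations
        return statistics.fmean(rng.gauss(true_mean, noise_sd) for _ in range(n_sites))

    baseline = 10.0
    control_before = group_mean(baseline)
    control_after = group_mean(baseline + background_change)
    impact_before = group_mean(baseline + initial_difference)
    impact_after = group_mean(baseline + initial_difference + background_change + true_effect)

    return {
        "BACI": (impact_after - impact_before) - (control_after - control_before),
        "BA": impact_after - impact_before,  # biased by background_change
        "CI": impact_after - control_after,  # biased by initial_difference
    }


for n in (5, 50, 5000):
    estimates = simulate(n)
    print(n, {design: round(value, 2) for design, value in estimates.items()})
```

With large `n`, BACI settles near the true effect of 5, whereas BA settles near 8 (true effect plus background change) and CI near 7 (true effect plus initial difference).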

Our results suggest that simpler study designs suffer from serious biases and that replicating such designs does not improve their accuracy. Only by using more robust designs that minimise these biases can we obtain more accurate estimates of impacts. For example, RCTs and BACI designs were several times more accurate than simpler designs. BA, CI and After designs even performed poorly at estimating the direction of an impact (i.e. whether it was positive or negative).

Recommendations and tools

We argue that, although more complex designs are challenging to use in ecology, we should still apply them at every opportunity. To encourage this, we need to address the barriers that prevent us from using robust designs, for example short-term funding timescales that make longer-term BACI studies difficult to carry out. We also need to embed impact evaluation more firmly in conservation project planning, so that we can collect data in a BACI design before a management action begins.

Unfortunately, there is no guarantee that the quality of studies monitoring impacts in ecology is going to improve any time soon. In the meantime, we need to find better ways to account for study quality when using evidence to inform policy and practice. Meta-analysts usually weight studies by inverse variance or sample size to partly account for this, but these weights can be flawed because studies with low variances or large sample sizes are not necessarily more accurate. Many meta-analyses dodge this issue by simply excluding studies with less robust designs, but this often leaves too little evidence and may hamper decision making. We argue that some information, treated with caution, is better than no information at all.

In our article, we present a weighting scale that meta-analysts and evidence assessors can use to produce simple weights for studies based on just two pieces of information: study design and sample size. These weights can be plugged into meta-analyses in exactly the same way as inverse variance weights, adjusting the overall result according to the design and sample size of each study. Take a look at this simple tool that can help you generate these weights for yourself.
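Because design-based weights slot in wherever inverse variance weights would go, the effect of swapping them can be illustrated with a fixed-effect weighted mean. This is a minimal sketch under stated assumptions: the study effects, variances and the `design_weights` values are invented placeholders, not the published weighting scale (which also depends on sample size), and the sketch ignores the between-study variance that a random-effects meta-analysis would add.

```python
def weighted_mean_effect(effects: list[float], weights: list[float]) -> float:
    """Fixed-effect pooled estimate: sum(w_i * y_i) / sum(w_i)."""
    if not effects or len(effects) != len(weights):
        raise ValueError("need one weight per effect size")
    return sum(w * y for w, y in zip(weights, effects)) / sum(weights)


def inverse_variance_weights(variances: list[float]) -> list[float]:
    # Conventional weights: more precise studies count for more
    return [1.0 / v for v in variances]


# Hypothetical studies: one BACI study and two precise but simpler-design studies
effects = [0.2, 1.0, 0.9]
variances = [0.04, 0.01, 0.01]
designs = ["BACI", "CI", "BA"]

# Placeholder design-based weights (NOT the values from the article's scale)
design_weights = {"BACI": 3.0, "CI": 1.0, "BA": 1.0}

iv_pooled = weighted_mean_effect(effects, inverse_variance_weights(variances))
design_pooled = weighted_mean_effect(effects, [design_weights[d] for d in designs])
print(round(iv_pooled, 3), round(design_pooled, 3))
# prints 0.867 0.5
```

Here inverse variance weighting lets the two precise but simpler-design studies dominate the pooled estimate, whereas the placeholder design-based weights pull it towards the BACI study.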

In our article, we also tested how these weights altered the results of several meta-analyses. We found that our weights often resulted in fewer significant effect sizes than inverse variance weighting, probably because many of those significant effects relied on studies with less robust designs. Our weights would be useful not only in future meta-analyses, but also when assessing the strength of evidence in cases where meta-analyses cannot be used (e.g. too few studies, or insufficient information to calculate effect sizes).

We hope our work has highlighted how important it is to use robust and accurate study designs to monitor and evaluate impacts in ecology. Failing to account for study designs in evidence assessment risks seriously misinforming managers and policymakers on the best ways to protect biodiversity. To sum up, design matters!

Read the open access article, Simple study designs in ecology produce inaccurate estimates of biodiversity responses, in Journal of Applied Ecology.
